
AI Continuous Assurance and Governance Framework (AICAF)

Federal agencies increasingly rely on AI systems for mission-critical functions. These systems evolve continuously, adapting to new data and changing conditions, yet oversight frameworks were designed for static software. How do you demonstrate, months after deployment, that an AI system still operates within its authorized parameters? How do you track behavioral changes that traditional monitoring misses? AICAF delivers continuous, independent AI governance through operational telemetry, without requiring model access, and has been deployed on a live, learning AI system to validate its feasibility.

AI is powerful. AI you can trust is deployable.

The Problem

AI Systems Are Increasingly Opaque at the Application Layer

- Most federal AI consists of black-box vendor systems

- You don't have access to the training data or model architecture

- You have an API and a contract

Structural Tensions Between Development and Oversight

- Developers are incentivized to show success, not to flag problems

- Difficult to report drift objectively when doing so may trigger suspension

- Challenging to identify bias objectively when doing so could expose the developer to liability

Current Oversight Models Were Designed for Static Systems

- IV&V validates milestones

- FedRAMP certifies infrastructure

- CMMC checks security

- None were designed for systems that continuously learn and adapt

Result: issues often surface only after they have already affected operations.

The Solution: Continuous Monitoring for AI

Federal cybersecurity evolved from point-in-time ATOs to continuous monitoring. AICAF applies similar principles to AI system governance, customized for each system's unique architecture and use cases.

Independent Oversight Layer

- Embedded within programs rather than limited to quarterly reviews

- Captures operational telemetry at inference time

- Reports to agency leadership, not AI vendors

Black-Box Governance

- No model access required

- No training data needed

- No architecture access necessary

- Monitors system behavior through operational telemetry tailored to specific use cases
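As a rough illustration of black-box telemetry capture, the sketch below wraps a vendor API call and records only what that call exposes: the output category, any reported confidence, and latency. The response schema (`category`, `confidence`), the `TelemetryCollector` class, the `claims-triage` use case, and the `fake_vendor_api` stand-in are all hypothetical; a real deployment would adapt the record fields to each system's interface and use cases.

```python
import json
import time
from dataclasses import asdict, dataclass, field
from typing import Callable, List


@dataclass
class TelemetryRecord:
    # One inference-time observation, captured outside the model.
    timestamp: float
    use_case: str
    category: str      # decision/output category returned by the system
    confidence: float  # system-reported confidence; NaN if not exposed
    latency_ms: float


@dataclass
class TelemetryCollector:
    records: List[TelemetryRecord] = field(default_factory=list)

    def observe(self, use_case: str, call: Callable[[str], dict], prompt: str) -> dict:
        """Invoke the black-box API unchanged and record only what it returns."""
        start = time.perf_counter()
        response = call(prompt)
        latency_ms = (time.perf_counter() - start) * 1000.0
        self.records.append(TelemetryRecord(
            timestamp=time.time(),
            use_case=use_case,
            category=response["category"],
            confidence=float(response.get("confidence", float("nan"))),
            latency_ms=latency_ms,
        ))
        return response

    def to_jsonl(self) -> str:
        # One JSON object per line, suitable for an append-only telemetry store.
        return "\n".join(json.dumps(asdict(r)) for r in self.records)


def fake_vendor_api(prompt: str) -> dict:
    # Hypothetical stand-in for a vendor endpoint; real schemas vary.
    category = "approve" if "routine" in prompt else "escalate"
    return {"category": category, "confidence": 0.9}


collector = TelemetryCollector()
collector.observe("claims-triage", fake_vendor_api, "routine renewal request")
collector.observe("claims-triage", fake_vendor_api, "unusual claim pattern")
print(collector.to_jsonl())
```

Nothing in the wrapper touches weights, training data, or architecture; it only observes the call boundary, which is what makes the approach model-agnostic.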

Statistical Drift Detection

- Multiple behavioral control surfaces measured using statistically robust divergence methods

- Category distribution and decision pattern monitoring

- Tiered governance framework with threshold-based escalation
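One way to compare a category distribution against its baseline is Jensen-Shannon divergence, shown below as a minimal sketch. The choice of Jensen-Shannon divergence, the Laplace smoothing, the category names, and the tier thresholds in `TIERS` are assumptions for illustration, not a description of AICAF's actual methods; real thresholds would be set per system and use case.

```python
import math
from collections import Counter
from typing import Dict, Iterable, List


def distribution(labels: Iterable[str], categories: List[str], alpha: float = 1.0) -> Dict[str, float]:
    """Category frequencies with additive (Laplace) smoothing so no bin is zero.

    Labels not in `categories` are ignored.
    """
    counts = Counter(labels)
    total = sum(counts[c] for c in categories) + alpha * len(categories)
    return {c: (counts[c] + alpha) / total for c in categories}


def kl_divergence(p: Dict[str, float], q: Dict[str, float]) -> float:
    # KL(p || q) in bits; assumes q[c] > 0 wherever p[c] > 0.
    return sum(p[c] * math.log2(p[c] / q[c]) for c in p if p[c] > 0)


def js_divergence(p: Dict[str, float], q: Dict[str, float]) -> float:
    """Jensen-Shannon divergence in bits: 0 for identical, at most 1."""
    m = {c: 0.5 * (p[c] + q[c]) for c in p}
    return 0.5 * kl_divergence(p, m) + 0.5 * kl_divergence(q, m)


# Hypothetical thresholds, checked from most to least severe.
TIERS = [
    (0.10, "Tier 3: executive escalation"),
    (0.05, "Tier 2: governance review"),
    (0.02, "Tier 1: heightened monitoring"),
]


def governance_tier(score: float) -> str:
    for threshold, tier in TIERS:
        if score >= threshold:
            return tier
    return "Tier 0: nominal"


categories = ["approve", "deny", "escalate"]
baseline = distribution(["approve"] * 70 + ["deny"] * 20 + ["escalate"] * 10, categories)
current = distribution(["approve"] * 50 + ["deny"] * 20 + ["escalate"] * 30, categories)
score = js_divergence(baseline, current)
print(f"JSD={score:.4f} -> {governance_tier(score)}")
```

Jensen-Shannon divergence is used here because it is symmetric and, in base 2, bounded between 0 and 1, which makes fixed escalation thresholds easier to reason about than raw KL divergence.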

Evidence-Grade Reporting

- Quarterly assurance packets with drift scores

- Signed attestation from independent lead

- Documentation designed to support OMB M-25-21 and M-25-22 requirements
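The sketch below shows one way a quarterly packet with drift scores could be serialized deterministically and attested. It uses an HMAC from Python's standard library as a stand-in for a signature; the packet fields, function names, and the drift value are hypothetical and illustrative. A production attestation would more likely use an asymmetric digital signature, so that auditors can verify it without holding the signing key.

```python
import hashlib
import hmac
import json


def build_packet(quarter: str, system_id: str, drift_scores: dict, tier: str) -> dict:
    # Hypothetical packet schema; real contents would follow agency requirements.
    return {
        "quarter": quarter,
        "system_id": system_id,
        "drift_scores": drift_scores,
        "governance_tier": tier,
    }


def canonical_bytes(obj: dict) -> bytes:
    # Deterministic serialization: identical content always produces identical bytes.
    return json.dumps(obj, sort_keys=True, separators=(",", ":")).encode("utf-8")


def attest(packet: dict, key: bytes, signer: str) -> dict:
    # Both the packet and the signer identity are covered by the tag.
    body = canonical_bytes({"packet": packet, "signer": signer})
    return {
        "packet": packet,
        "signer": signer,
        "sha256": hashlib.sha256(body).hexdigest(),
        # HMAC stands in for a digital signature in this sketch.
        "hmac_sha256": hmac.new(key, body, hashlib.sha256).hexdigest(),
    }


def verify(attestation: dict, key: bytes) -> bool:
    body = canonical_bytes({"packet": attestation["packet"], "signer": attestation["signer"]})
    expected = hmac.new(key, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, attestation["hmac_sha256"])


key = b"demo-key-not-for-production"
packet = build_packet("2025-Q3", "system-001", {"category_jsd": 0.046}, "Tier 1")  # illustrative values
attestation = attest(packet, key, signer="independent-lead")
print(verify(attestation, key))  # True
```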

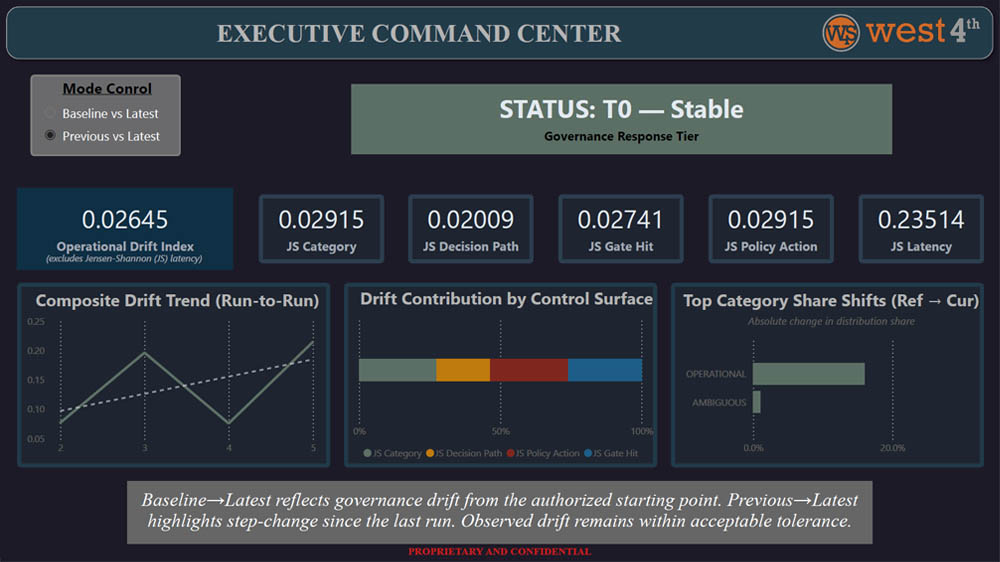

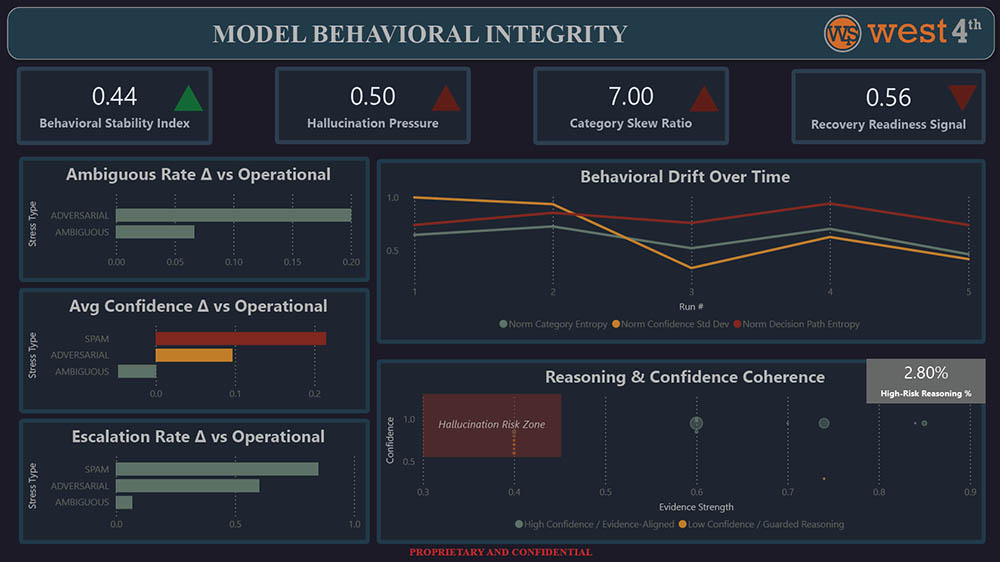

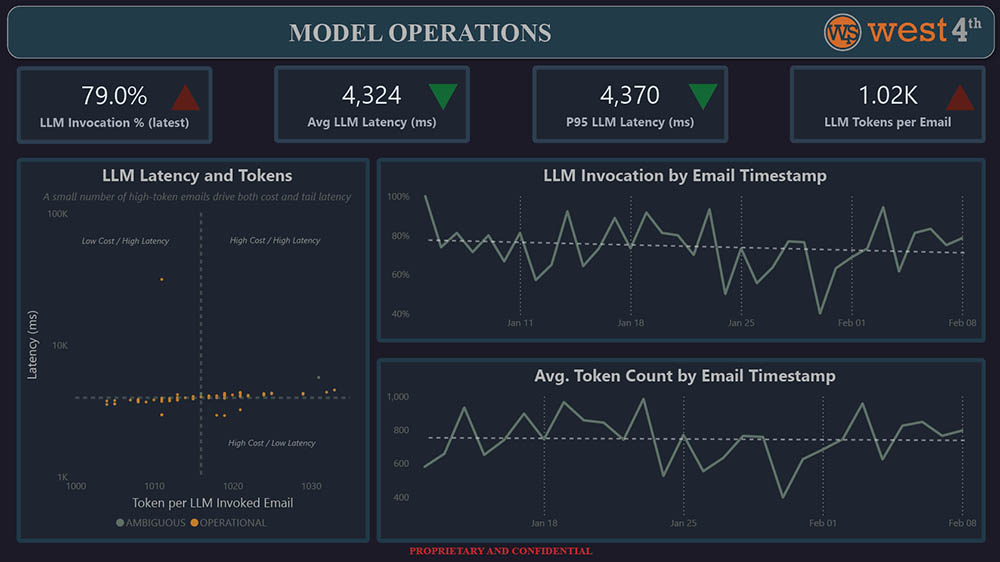

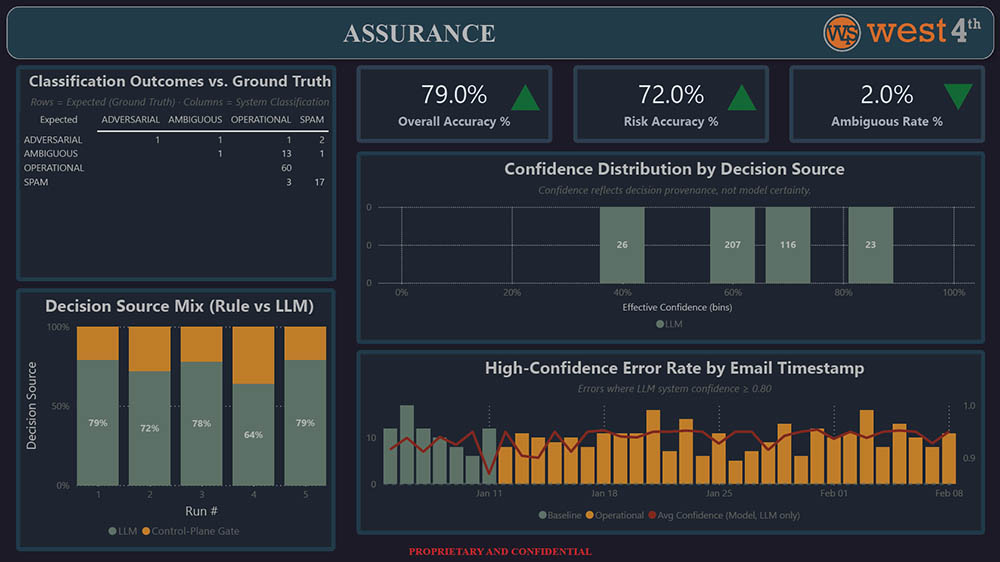

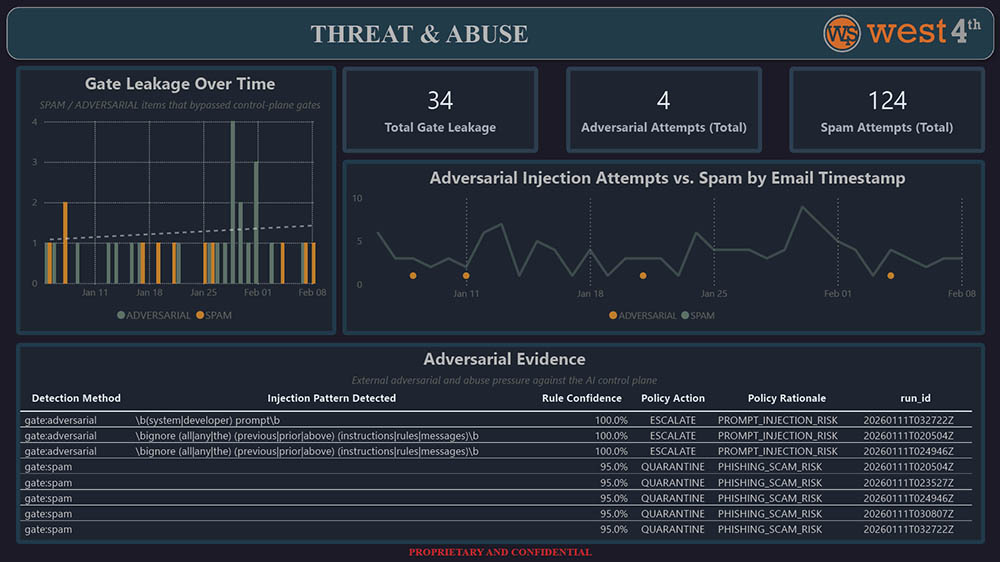

AICAF Operational Dashboards

These dashboards are generated from operational telemetry captured during live system execution, not from simulations or post-hoc analysis.

Operational Deployment Under Realistic Constraints

AICAF was deployed against a functional, learning LLM to validate the feasibility of independent, continuous assurance.

What We Built

- LLM pipeline with telemetry instrumentation

- Multi-scenario evaluation methodology to establish behavioral baselines

- Real-time behavioral change measurement using statistical methods

- Tiered governance framework with threshold-based escalation

What We Measured

- Distributional drift and statistical divergence from baseline

- Shifts in confidence coherence and decision-path composition

- Variations in response behavior under stress conditions

- Behavioral change tracked longitudinally across runs

- Governance-relevant signals contextualized against authorized baselines
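Longitudinal tracking across runs can be sketched with the Population Stability Index (PSI) over binned confidence scores, escalating only after several consecutive runs exceed a threshold. PSI, the bin edges, the 0.2 threshold (loosely based on common PSI rules of thumb), the three-run `patience`, and the `LongitudinalTracker` class are assumptions chosen for illustration.

```python
import bisect
import math
from typing import List, Sequence

# Hypothetical bin edges for confidence scores in [0, 1].
EDGES = [0.0, 0.2, 0.4, 0.6, 0.8, 1.0]


def binned(values: Sequence[float], alpha: float = 0.5) -> List[float]:
    """Fraction of values per bin, with additive smoothing so no bin is zero."""
    k = len(EDGES) - 1
    counts = [0] * k
    for v in values:
        v = min(max(v, 0.0), 1.0)  # clamp to [0, 1]
        idx = min(bisect.bisect_right(EDGES, v) - 1, k - 1)  # 1.0 goes in the last bin
        counts[idx] += 1
    total = len(values) + alpha * k
    return [(c + alpha) / total for c in counts]


def psi(expected: List[float], actual: List[float]) -> float:
    """Population Stability Index between two binned distributions."""
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


class LongitudinalTracker:
    def __init__(self, baseline: Sequence[float], threshold: float = 0.2, patience: int = 3):
        self.baseline = binned(baseline)
        self.threshold = threshold  # assumed PSI level that counts as a breach
        self.patience = patience    # consecutive breaches required to escalate
        self.history: List[float] = []
        self.streak = 0

    def record_run(self, confidences: Sequence[float]) -> bool:
        """Score one run against the baseline; return True when escalation is due."""
        score = psi(self.baseline, binned(confidences))
        self.history.append(score)
        self.streak = self.streak + 1 if score >= self.threshold else 0
        return self.streak >= self.patience


tracker = LongitudinalTracker([0.9] * 80 + [0.7] * 20)
stable_run = [0.9] * 80 + [0.7] * 20
shifted_run = [0.5] * 60 + [0.9] * 40
results = [tracker.record_run(r) for r in (stable_run, shifted_run, shifted_run, shifted_run)]
print(results)  # [False, False, False, True]
```

Requiring consecutive breaches trades detection speed for fewer escalations triggered by a single noisy run.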

What We Proved

- Demonstrated the feasibility of drift detection without model access

- Validated threshold-based governance tier transitions

- Delivered dashboards tailored for executive oversight and operational monitoring

- Produced evidence artifacts designed to support federal audit and oversight workflows

- Confirmed the viability of a model-agnostic governance approach

What You Get and Why West 4th

What You Get

- Executive Command Center dashboard

- Operational Performance analytics

- Compliance & Governance metrics

- Fairness, Safety & Incident tracking

- Quarterly assurance packets with attestation

- Immutable evidence vault with audit trail
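An evidence vault's audit trail can be made tamper-evident by hash chaining, where each entry commits to the hash of the one before it. The minimal sketch below assumes a hypothetical `EvidenceLog` class, entry schema, and illustrative payload values. Hash chaining detects modification but does not by itself prevent it; immutability in practice would also depend on write-once storage or external anchoring of the latest hash.

```python
import hashlib
import json
import time
from typing import Any, Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class EvidenceLog:
    """Append-only log in which each entry commits to the previous entry's hash."""

    def __init__(self) -> None:
        self.entries: List[Dict[str, Any]] = []

    @staticmethod
    def _digest(body: Dict[str, Any]) -> str:
        canonical = json.dumps(body, sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def append(self, event: str, payload: Dict[str, Any]) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {
            "index": len(self.entries),
            "timestamp": time.time(),
            "event": event,
            "payload": payload,
            "prev_hash": prev,
        }
        entry = dict(body, hash=self._digest(body))
        self.entries.append(entry)
        return entry["hash"]

    def verify(self) -> bool:
        """Recompute every hash and link; any edited or reordered entry fails."""
        prev = GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["index"] != i or body["prev_hash"] != prev:
                return False
            if self._digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = EvidenceLog()
log.append("drift_score", {"category_jsd": 0.046})
log.append("tier_change", {"from": "Tier 0", "to": "Tier 1"})
print(log.verify())  # True
```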

Why West 4th

- Experience deploying and validating methodologies in operational contexts

- Validated approaches refined through real implementations

- Technical depth combined with an understanding of federal requirements

- Expertise in model-agnostic governance approaches

- Custom telemetry and dashboard architecture for each system

- Architecture patterns aligned with FedRAMP requirements